Ablation Studies

To validate the architectural choices in DantinoX rigorously, we ran extensive hyperparameter sweeps with Weights & Biases (W&B). Rather than relying on conventional wisdom, we tested every major component, from routing penalties to attention mechanisms, empirically against hardware constraints and convergence stability.
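
A Bayesian sweep of this kind is typically described by a W&B sweep configuration. The sketch below is a hypothetical example. Only `val_loss` comes from this page. The parameter names (`n_layers`, `d_model`, `weight_tying`, `num_experts`, `router_aux_loss_coef`) and their ranges are illustrative assumptions, not the actual DantinoX search space.

```python
# Hypothetical W&B sweep configuration for a Bayesian search.
# Parameter names and ranges are illustrative assumptions, not DantinoX's settings.
sweep_config = {
    "method": "bayes",  # Bayesian optimization over the search space
    "metric": {"name": "val_loss", "goal": "minimize"},
    "parameters": {
        # Learning rates are usually searched on a log scale.
        "learning_rate": {
            "distribution": "log_uniform_values",
            "min": 1e-4,
            "max": 3e-3,
        },
        "n_layers": {"values": [4, 6, 8, 12]},
        "d_model": {"values": [256, 384, 512, 768]},
        "weight_tying": {"values": [True, False]},
        "num_experts": {"values": [4, 8, 16]},
        "router_aux_loss_coef": {
            "distribution": "log_uniform_values",
            "min": 1e-3,
            "max": 1e-1,
        },
    },
}
```

Assume a training function `train()` (hypothetical) that calls `wandb.init()` and logs `val_loss`. The config would then be registered with `sweep_id = wandb.sweep(sweep=sweep_config, project="...")` and executed with `wandb.agent(sweep_id, function=train, count=...)`.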

The insights below come from the joint distribution of validation loss (`val_loss`), peak GPU memory in GB (`vram_gb`), and time per training step in milliseconds (`ms_per_step`) across hundreds of Bayesian optimization trials.

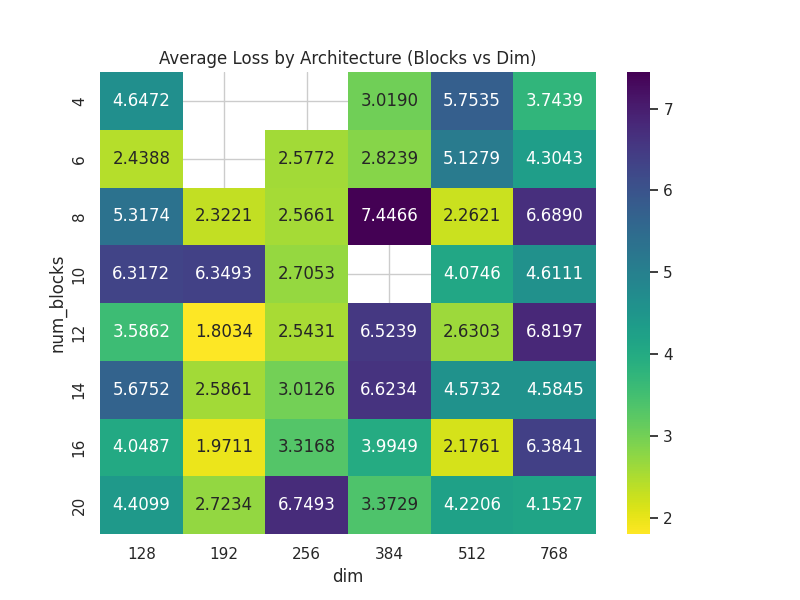

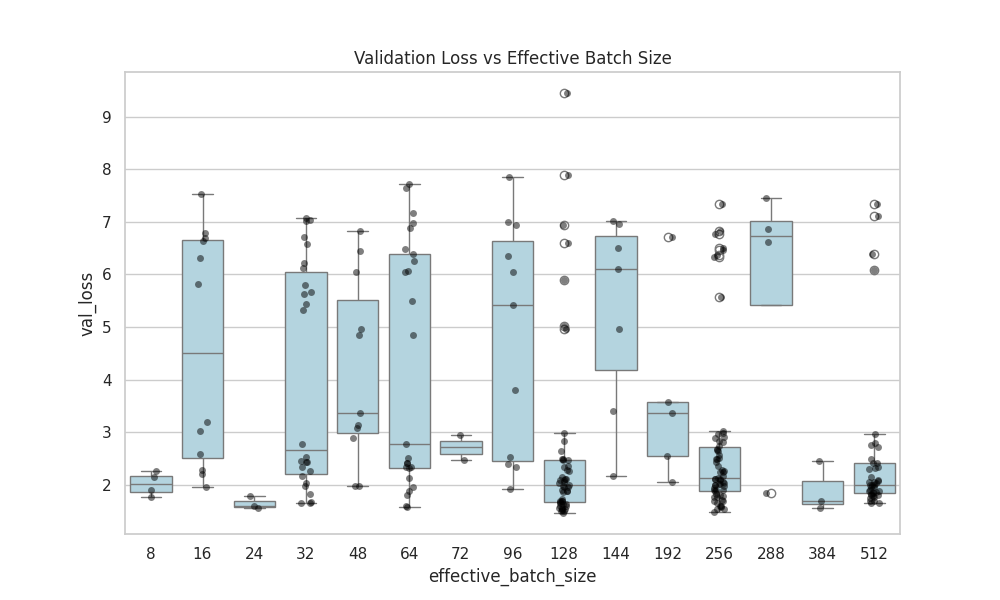

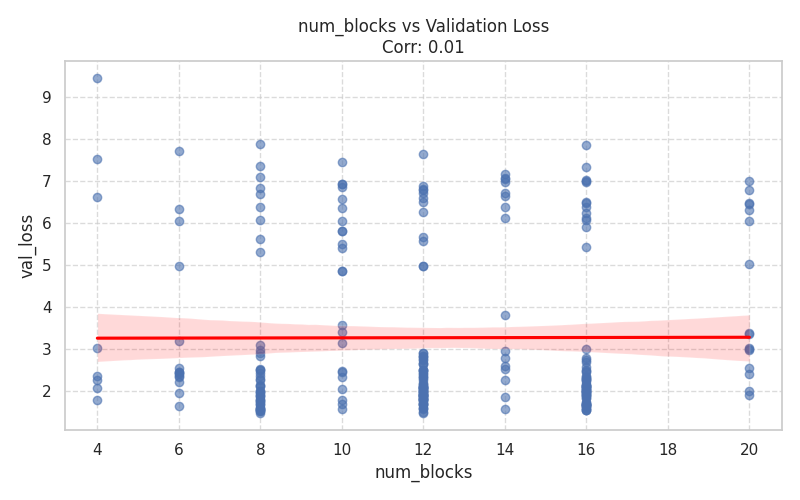
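
One way to read a joint distribution over three objectives is to keep only the Pareto-optimal trials. These are the runs that no other run beats on `val_loss`, `vram_gb`, and `ms_per_step` at once. This page does not say the authors used such a filter. The following is a minimal sketch with made-up trial values that treats all three metrics as lower-is-better.

```python
from typing import NamedTuple


class Trial(NamedTuple):
    """One sweep trial summarized by the three tracked metrics (all lower-is-better)."""
    name: str
    val_loss: float
    vram_gb: float
    ms_per_step: float


def metrics(t: Trial) -> tuple[float, float, float]:
    return (t.val_loss, t.vram_gb, t.ms_per_step)


def dominates(a: Trial, b: Trial) -> bool:
    """True if `a` is no worse than `b` on every metric and strictly better on at least one."""
    pairs = list(zip(metrics(a), metrics(b)))
    return all(x <= y for x, y in pairs) and any(x < y for x, y in pairs)


def pareto_front(trials: list[Trial]) -> list[Trial]:
    """Keep trials that no other trial dominates (O(n^2), adequate for hundreds of runs)."""
    return [t for t in trials if not any(dominates(o, t) for o in trials)]


# Illustrative numbers only, not measured DantinoX results.
trials = [
    Trial("a", 3.10, 12.0, 210.0),
    Trial("b", 3.05, 18.0, 260.0),
    Trial("c", 3.20, 14.0, 230.0),  # worse than "a" on all three metrics
    Trial("d", 3.40, 8.0, 150.0),
]
front = pareto_front(trials)
print([t.name for t in front])  # ['a', 'b', 'd']
```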

1. Model Capacity & Convergence Dynamics

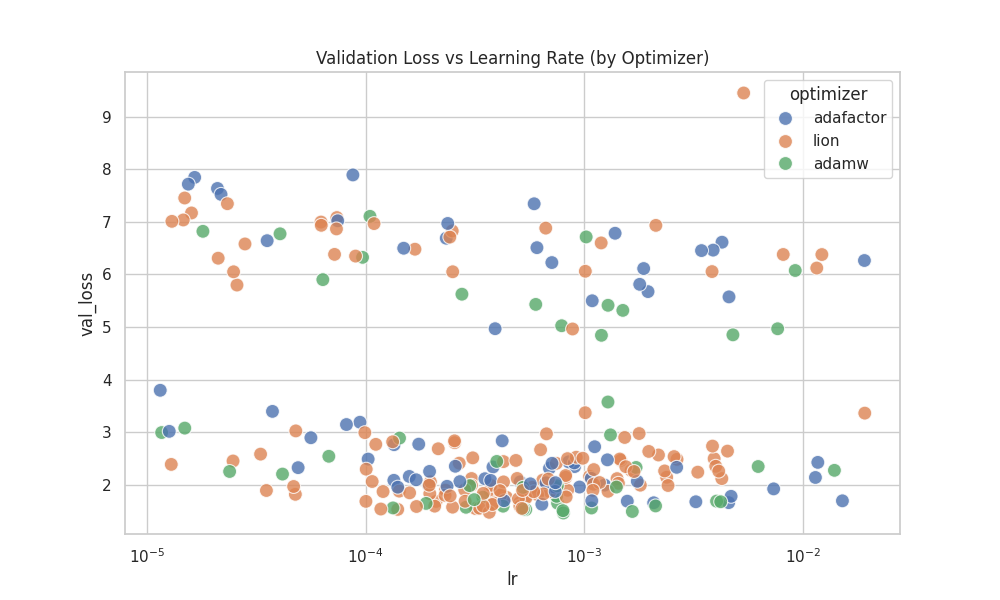

This section analyzes how model scaling (depth vs. width) and core training hyperparameters affect final language-modeling performance, measured as validation loss.

Depth, Width, and Batch Scaling

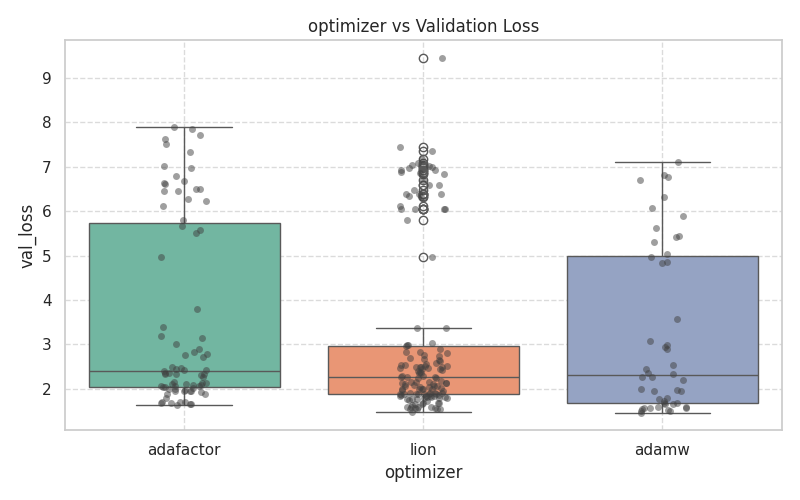
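
A rough parameter count makes the depth-vs-width trade-off concrete. This sketch assumes a standard decoder-only block: four d×d attention projections plus a dense MLP with 4× expansion, with no biases. That may not match DantinoX's exact layers; a gated MLP, for instance, uses three matrices instead of two. The vocabulary size is hypothetical.

```python
def transformer_params(n_layers: int, d_model: int, vocab_size: int, tied: bool = True) -> int:
    """Approximate parameter count of a decoder-only transformer.

    Assumes Q, K, V, O projections (4 * d^2) plus a dense MLP with 4x
    expansion (2 * 4 * d^2) per layer; biases and norms are ignored.
    """
    per_layer = 12 * d_model * d_model
    embedding = vocab_size * d_model
    lm_head = 0 if tied else vocab_size * d_model  # separate output matrix when untied
    return n_layers * per_layer + embedding + lm_head


vocab = 32_000  # hypothetical vocabulary size
deep_narrow = transformer_params(n_layers=12, d_model=512, vocab_size=vocab)
shallow_wide = transformer_params(n_layers=3, d_model=1024, vocab_size=vocab)
print(deep_narrow, shallow_wide)  # 54132736 70516736
```

Under these assumptions, 12 layers at width 512 and 3 layers at width 1024 have identical non-embedding parameter counts (about 37.7M), because per-layer cost grows with d². The wider model costs more only through its larger embedding table.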

Optimizer & Learning Rate Sensitivity

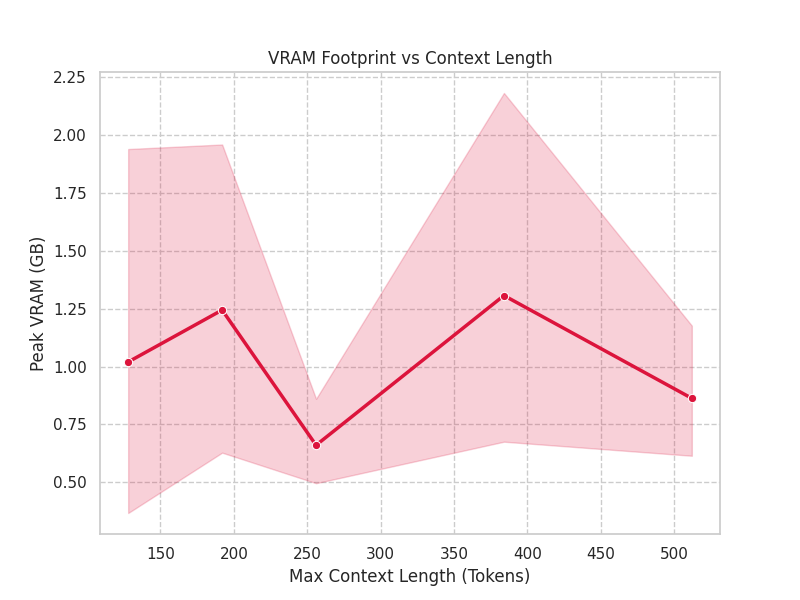

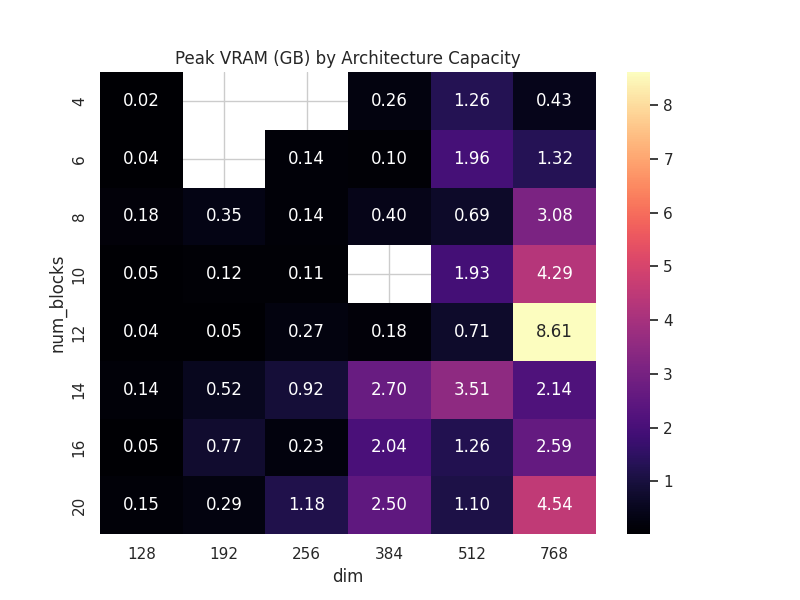

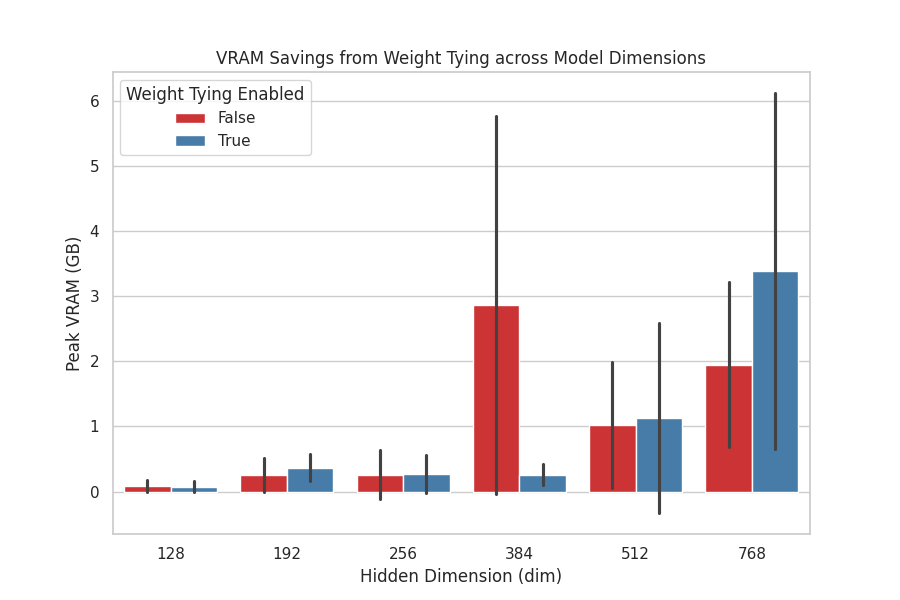

2. Architecture Specifics & Memory Efficiency

Modern LLM training is largely constrained by GPU memory. This section measures how much the memory-saving architectural features implemented in DantinoX reduce the GPU footprint.

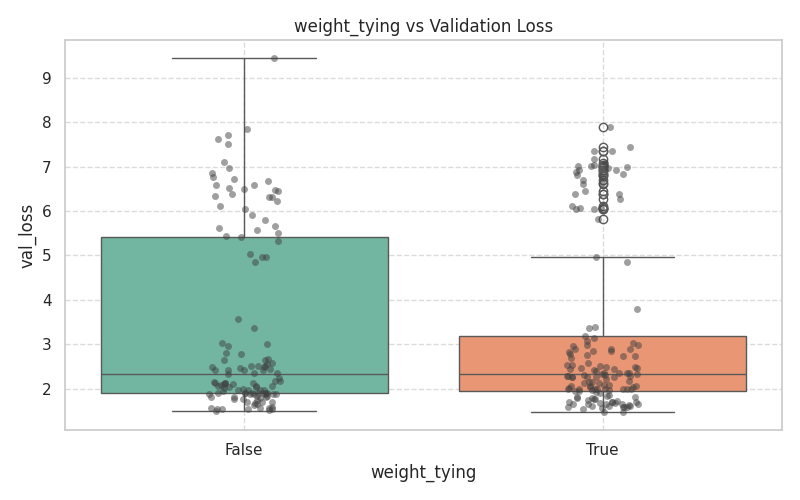

Parameter Sharing & Attention Optimizations

Weight Tying VRAM Savings: empirical verification of the VRAM reduction achieved by sharing the embedding matrix with the output LM head, across different model dimensions.
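
The size of the weight-tying saving follows from the shapes involved. An untied LM head is a separate `vocab_size × d_model` matrix, so tying removes exactly that many parameters. The sketch below converts this count to memory under an assumed 16 bytes per parameter (fp32 weights, gradients, and two Adam moments). Real savings depend on the precision and optimizer actually used, and this figure excludes activations.

```python
def tying_savings(vocab_size: int, d_model: int, bytes_per_param: int = 16) -> tuple[int, float]:
    """Parameters and memory (GB) saved by tying the LM head to the embedding matrix.

    bytes_per_param = 16 assumes fp32 weights (4) + fp32 gradients (4)
    + two fp32 Adam moments (8). Activations and any output bias are ignored.
    """
    saved_params = vocab_size * d_model  # size of the untied (d_model, vocab_size) head
    saved_gb = saved_params * bytes_per_param / 1e9
    return saved_params, saved_gb


params, gb = tying_savings(vocab_size=32_000, d_model=768)  # hypothetical sizes
print(params, round(gb, 3))  # 24576000 0.393
```

Because the saving is linear in `d_model`, it grows with model width. It is also a larger share of the total for small models, where the embedding table is a bigger fraction of all parameters.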

VRAM Scaling Laws

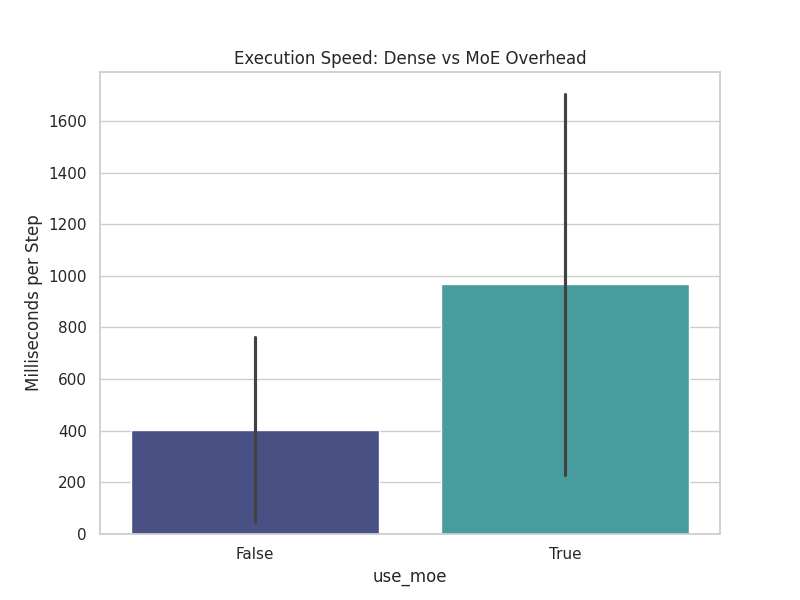

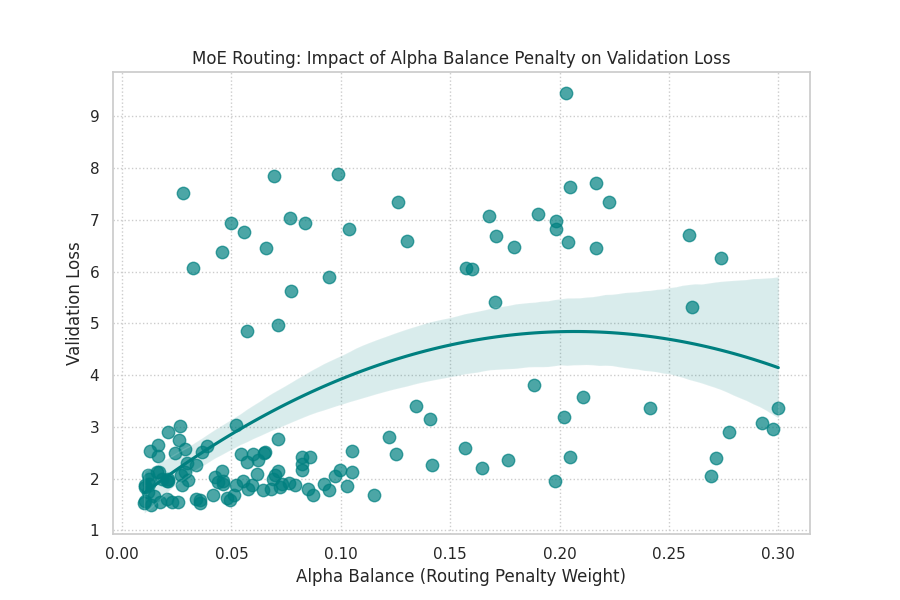

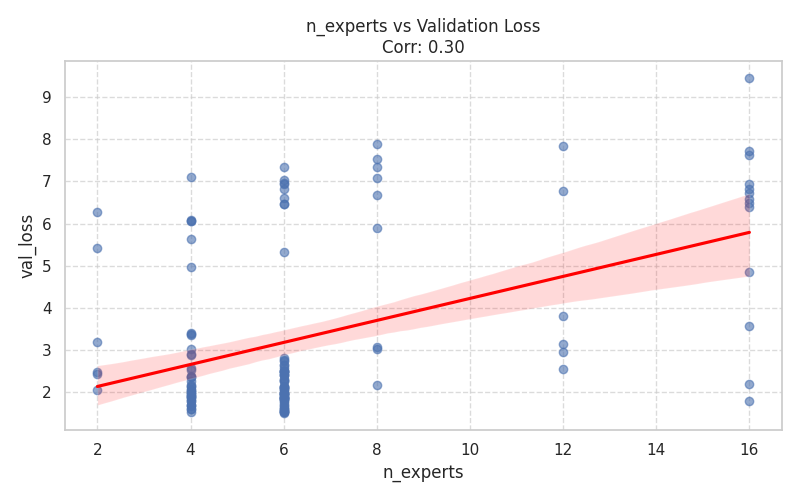

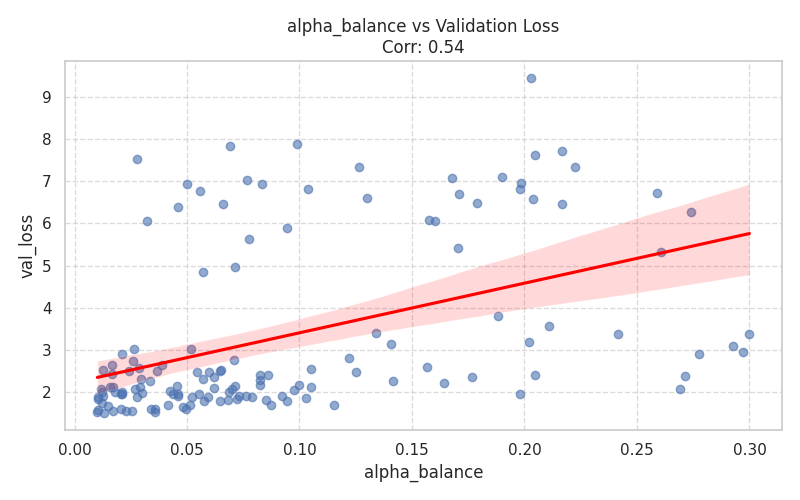

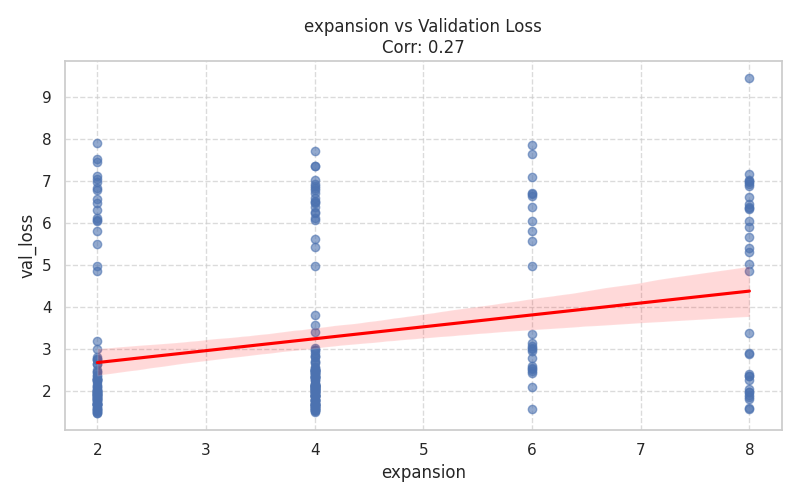
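
To see why context length drives VRAM scaling, it helps to split training memory into two parts: a static part that scales with parameter count and an attention part that scales with sequence length. The sketch below is deliberately crude. It assumes fp32 optimizer state (16 bytes per parameter) and a naive attention implementation that keeps a (batch, heads, seq_len, seq_len) probability matrix per layer in bf16 for the backward pass. All other activations and allocator overhead are ignored.

```python
def estimate_vram_gb(n_params: int, batch: int, seq_len: int, n_layers: int,
                     n_heads: int, bytes_per_param: int = 16,
                     act_bytes: int = 2) -> dict[str, float]:
    """Very rough training-memory estimate (assumptions in the lead-in).

    static_gb: weights + gradients + Adam moments.
    attn_scores_gb: per-layer (batch, heads, seq, seq) attention matrix kept
    by naive attention; grows quadratically with seq_len.
    """
    static = n_params * bytes_per_param
    attn_scores = n_layers * batch * n_heads * seq_len * seq_len * act_bytes
    return {"static_gb": static / 1e9, "attn_scores_gb": attn_scores / 1e9}


# Hypothetical model and batch sizes.
short = estimate_vram_gb(54_132_736, batch=8, seq_len=1024, n_layers=12, n_heads=8)
long = estimate_vram_gb(54_132_736, batch=8, seq_len=2048, n_layers=12, n_heads=8)
print(short, long)
```

Doubling `seq_len` quadruples `attn_scores_gb` while `static_gb` stays unchanged. Memory-efficient attention kernels target exactly this quadratic term by never materializing the full matrix.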

3. Sparse Mixture of Experts (MoE) Analysis

Implementing MoE in JAX requires balancing the speed overhead of expert routing against routing quality. This section examines the performance trade-offs of the gated MLP blocks.

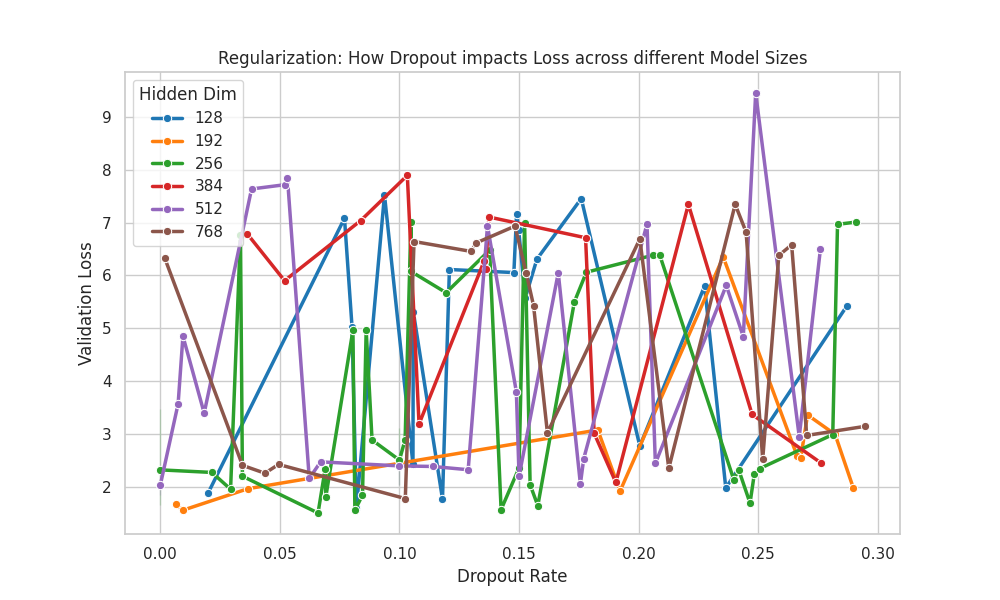

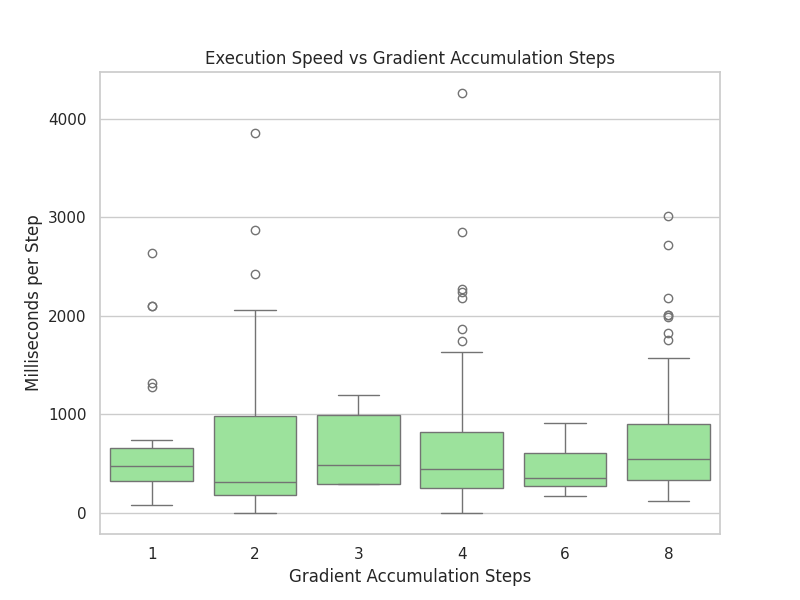
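
This page does not specify the DantinoX router, so the following is a generic sketch. It uses top-k softmax gating with the auxiliary load-balancing loss popularized by the Switch Transformer, N · Σ_i f_i · P_i. Here f_i is the fraction of routing slots assigned to expert i and P_i is its mean router probability. It is written in plain Python for readability, not as a JAX kernel.

```python
import math


def softmax(logits: list[float]) -> list[float]:
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(router_logits: list[list[float]], k: int) -> tuple[list[list[tuple[int, float]]], float]:
    """Top-k routing plus a Switch-Transformer-style load-balancing loss.

    router_logits: one list of per-expert logits per token.
    Returns (assignments, aux_loss); assignments[t] holds (expert, gate) pairs
    whose gates are renormalized to sum to 1 over the selected experts.
    """
    n_tokens, n_experts = len(router_logits), len(router_logits[0])
    dispatch_counts = [0] * n_experts
    prob_sums = [0.0] * n_experts
    assignments = []
    for logits in router_logits:
        probs = softmax(logits)
        top = sorted(range(n_experts), key=lambda e: probs[e], reverse=True)[:k]
        norm = sum(probs[e] for e in top)
        assignments.append([(e, probs[e] / norm) for e in top])
        for e in top:
            dispatch_counts[e] += 1
        for e in range(n_experts):
            prob_sums[e] += probs[e]
    f = [c / (n_tokens * k) for c in dispatch_counts]  # fraction of slots per expert
    p = [s / n_tokens for s in prob_sums]  # mean router probability per expert
    aux_loss = n_experts * sum(fi * pi for fi, pi in zip(f, p))
    return assignments, aux_loss
```

The loss is 1.0 under uniform routing and rises as tokens concentrate on a few experts. In training it would be scaled by a small tunable coefficient and added to the language-modeling loss. A vectorized JAX version would typically apply `jax.nn.softmax` and `jax.lax.top_k` to a `(tokens, experts)` logits array.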

4. Regularization & Training Efficiency

The final section evaluates how to control overfitting and maximize the utilization of hardware resources during the training loop.

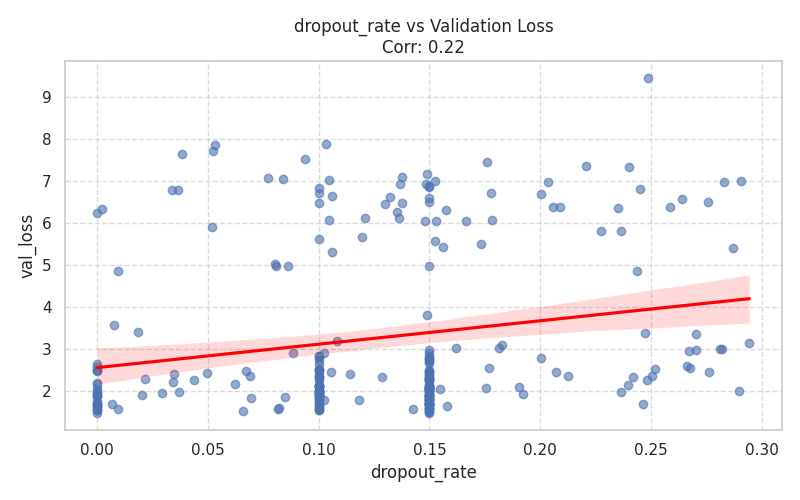

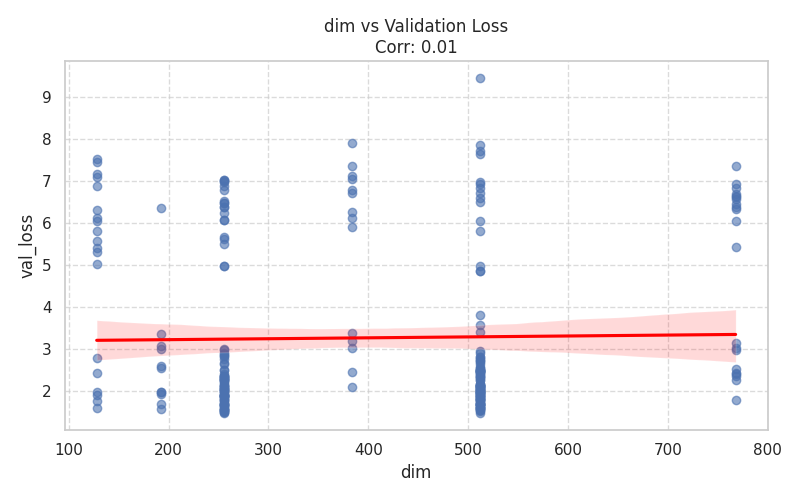

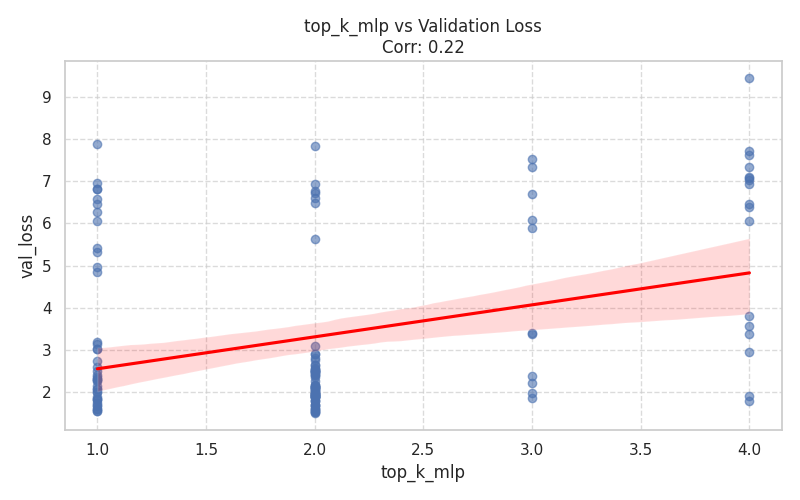

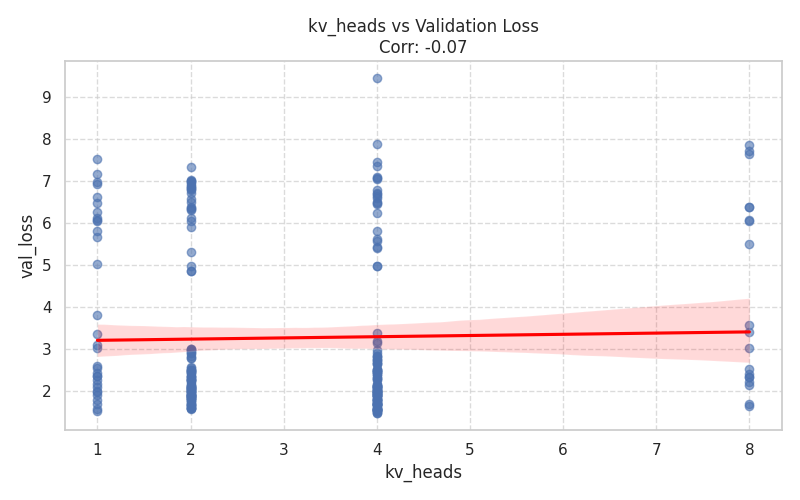
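
Runs in a sweep may differ in batch size and context length, so `ms_per_step` alone is not directly comparable across them. Normalizing to tokens per second is a simple fix. This sketch assumes each logged step is one optimizer step over `batch_size × seq_len` tokens, with no gradient accumulation.

```python
def tokens_per_second(batch_size: int, seq_len: int, ms_per_step: float) -> float:
    """Training throughput implied by the logged step time.

    Assumes one step consumes batch_size * seq_len tokens (no gradient accumulation).
    """
    tokens_per_step = batch_size * seq_len
    return tokens_per_step / (ms_per_step / 1000.0)  # convert ms to seconds


print(tokens_per_second(batch_size=8, seq_len=1024, ms_per_step=250.0))  # 32768.0
```

For example, 8 sequences of 1,024 tokens at 250 ms per step correspond to 32,768 tokens per second. This is the quantity to compare when deciding whether a larger batch actually improves hardware utilization.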

Appendix: Complete Parameter Distributions

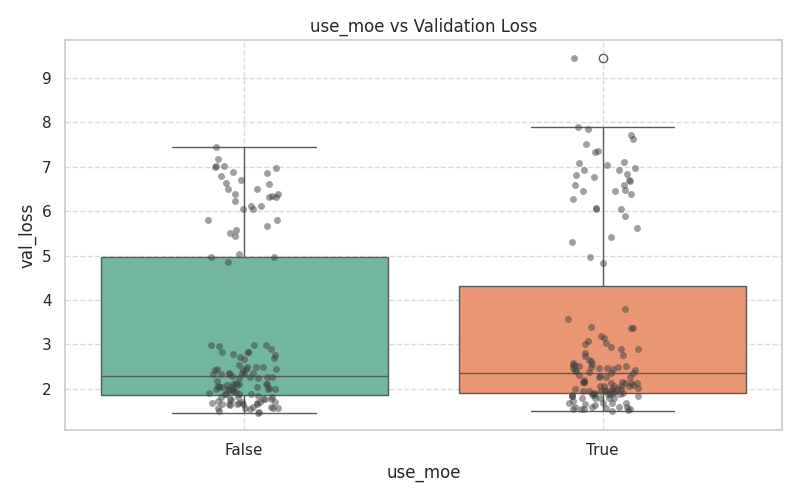

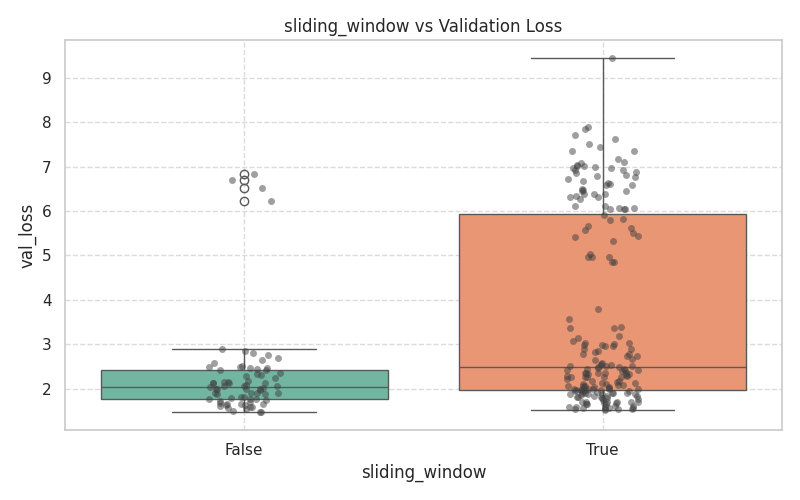

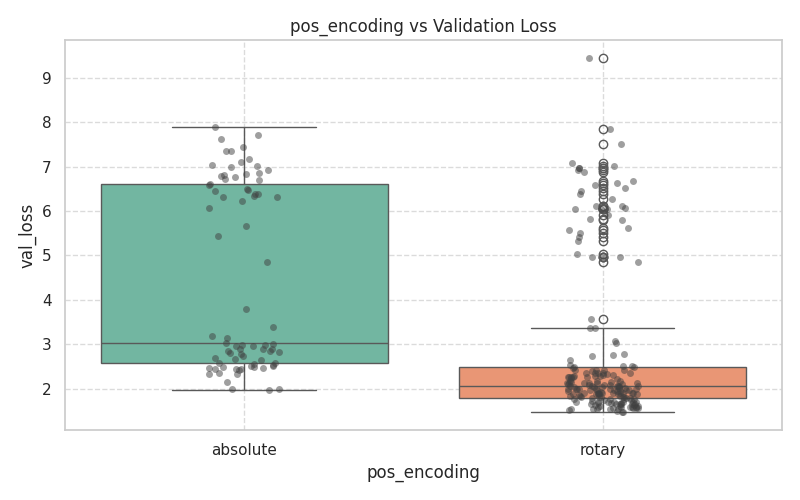

For transparency and reproducibility, the plots below show the isolated distribution of every hyperparameter swept during Bayesian optimization, each plotted against validation loss.

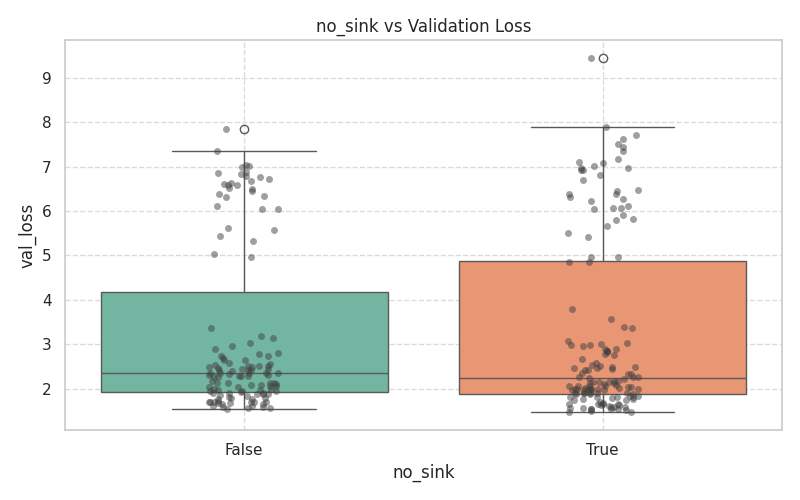

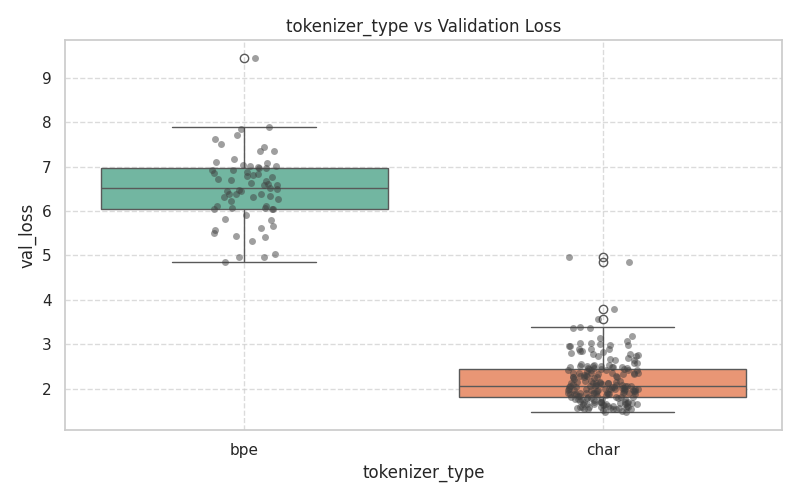

Categorical Architectural Choices (Boxplots)

These plots show the median and spread of validation loss for each boolean toggle and categorical option.

Panels: Core & Routing; Attention & Positional.

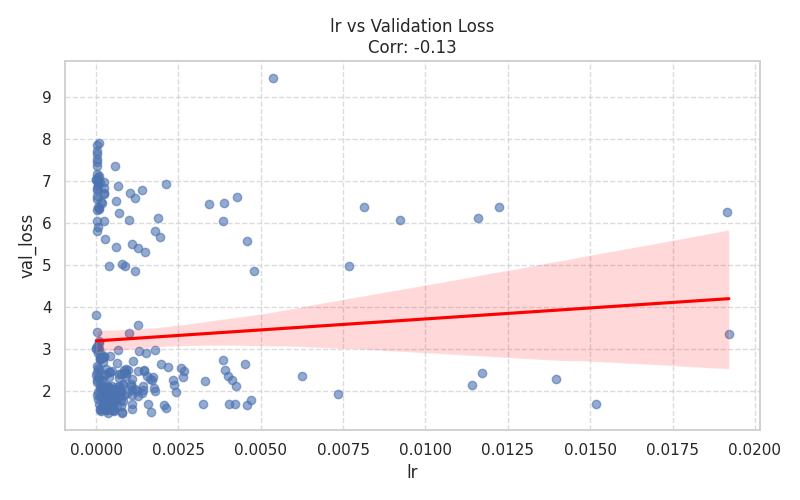

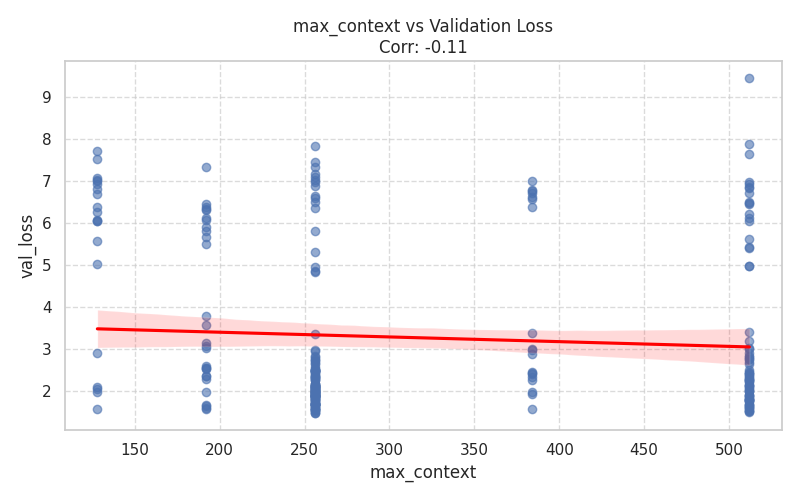

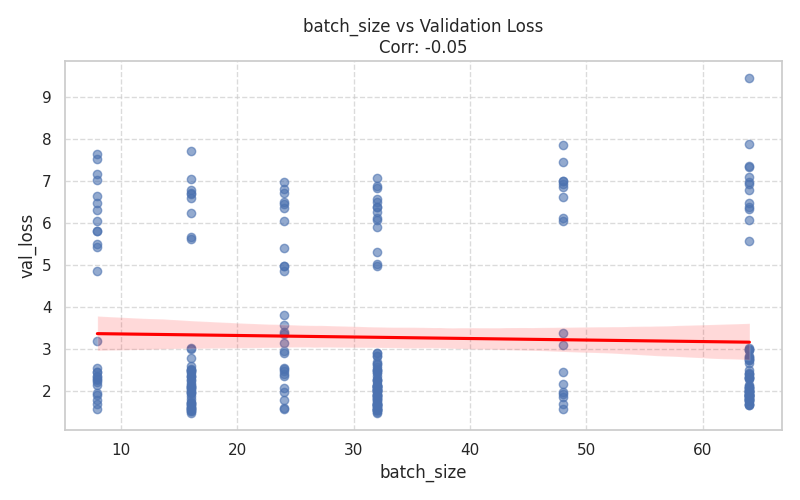

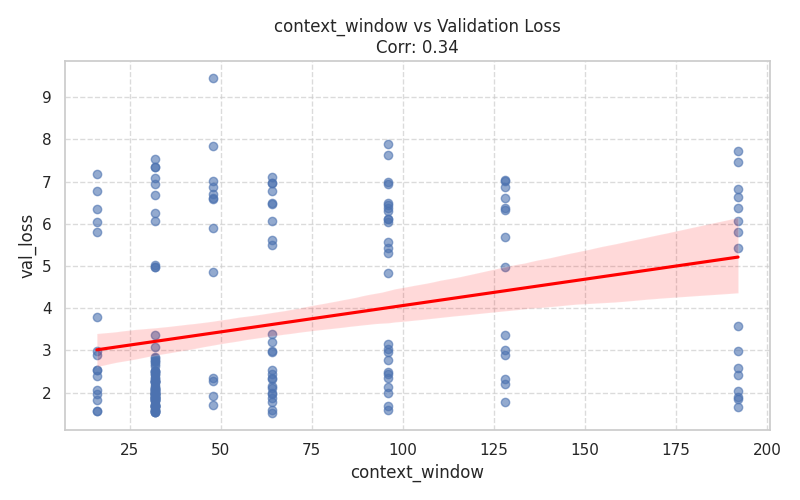

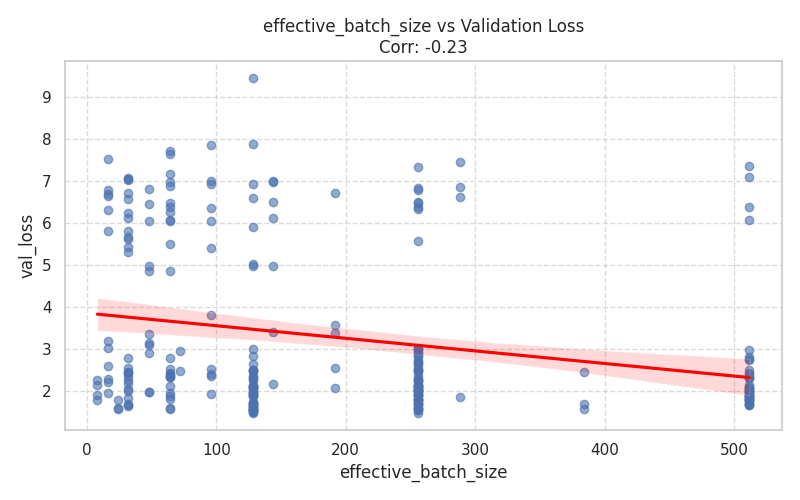

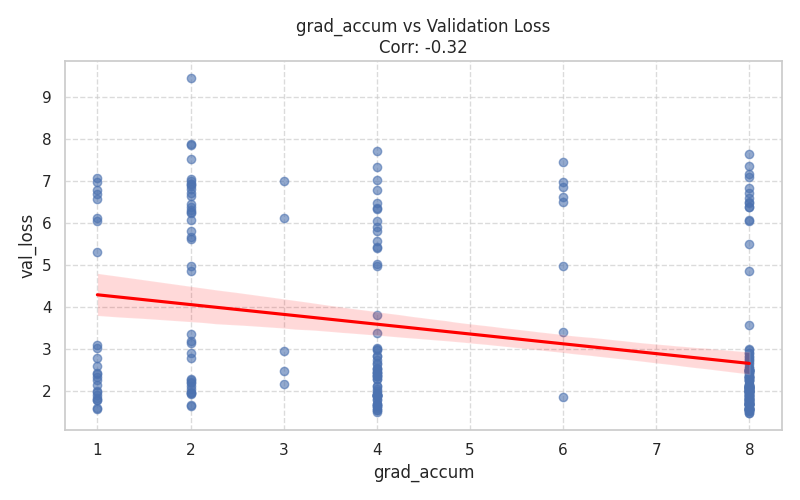

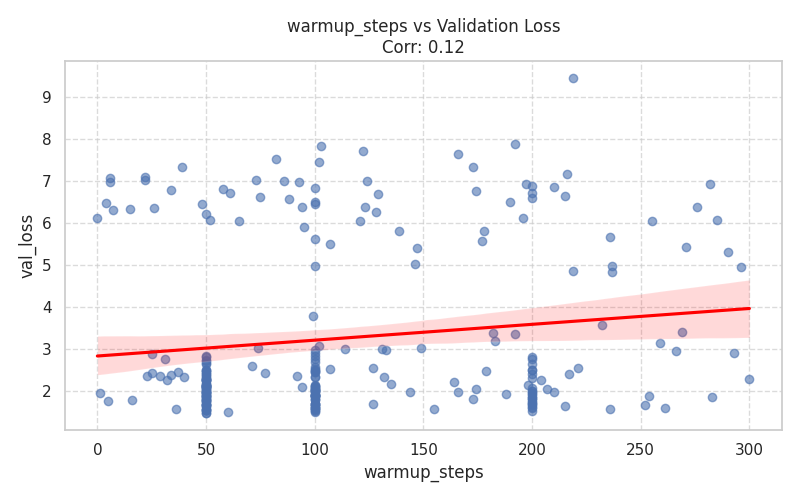
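
The statistics behind each boxplot can be reproduced from exported run data. This sketch assumes runs are available as a list of dicts keyed by hyperparameter name and `val_loss`, for instance flattened from a W&B export. The `toggle` key and all values in the example are hypothetical. The function reports the median and interquartile range that a boxplot draws, plus the sample variance.

```python
import statistics
from collections import defaultdict


def summarize_by_option(runs: list[dict], param: str, metric: str = "val_loss") -> dict:
    """Boxplot-style summary of `metric` for each value of a categorical `param`."""
    groups: dict = defaultdict(list)
    for run in runs:
        groups[run[param]].append(run[metric])
    summary = {}
    for option, values in groups.items():
        if len(values) < 2:
            continue  # quartiles and sample variance need at least two runs
        # "inclusive" interpolates linearly, like numpy.percentile's default method
        q1, _, q3 = statistics.quantiles(values, n=4, method="inclusive")
        summary[option] = {
            "n": len(values),
            "median": statistics.median(values),
            "iqr": q3 - q1,
            "variance": statistics.variance(values),
        }
    return summary


# Illustrative records only, not measured DantinoX results.
runs = [
    {"toggle": True, "val_loss": 3.0},
    {"toggle": True, "val_loss": 3.2},
    {"toggle": True, "val_loss": 3.1},
    {"toggle": True, "val_loss": 3.3},
    {"toggle": False, "val_loss": 3.4},
    {"toggle": False, "val_loss": 3.5},
    {"toggle": False, "val_loss": 3.6},
]
summary = summarize_by_option(runs, "toggle")
```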

Numeric Hyperparameters (Scatter Plots)

These plots show validation loss against each continuous or discrete numeric hyperparameter, annotated with the Spearman rank-correlation trend.

Panels: Training Dynamics; Memory & Context; Architecture Dimensions; Mixture of Experts (MoE).
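
The Spearman coefficient shown on each scatter plot is the Pearson correlation of rank-transformed values. This page does not state which library computed it. The dependency-free sketch below handles ties by averaging ranks, the usual convention.

```python
def average_ranks(values: list[float]) -> list[float]:
    """1-based ranks; tied values share the mean of the ranks they span."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values equal to values[order[i]].
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # mean of 1-based positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def spearman(x: list[float], y: list[float]) -> float:
    """Spearman rank correlation (undefined if either input is constant)."""
    rx, ry = average_ranks(x), average_ranks(y)
    n = len(x)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sx = sum((a - mx) ** 2 for a in rx) ** 0.5
    sy = sum((b - my) ** 2 for b in ry) ** 0.5
    return cov / (sx * sy)
```

On Python 3.12+, `statistics.correlation(x, y, method="ranked")` should give the same value, and `scipy.stats.spearmanr` is the common library alternative. Because Spearman depends only on ranks, it captures monotonic trends such as "loss keeps falling as width grows" even when the relationship is far from linear.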